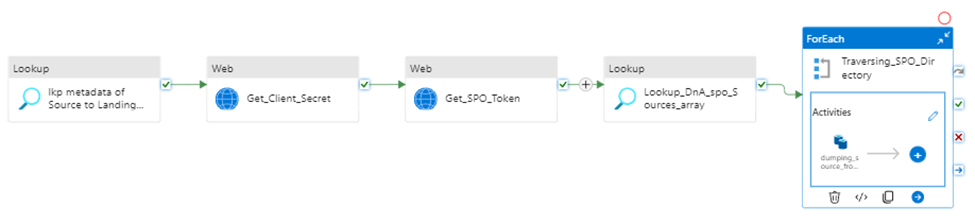

In this article I will provide a fully Metadata driven solution about using Azure Data Factory (ADF) or Synapse Analytics (ASA) incrementally copy multiple data sets one time from SharePoint Online (SPO) then sink them to ADSL Gen2.

Previously, I have published articles regarding ADF or ASA working with SPO. if you are interested in specifics matters, please look at related articles from here , or email me at william . chen @ mainri.ca (please remove spaces from email account 🙂 ).

Scenario and Requirements

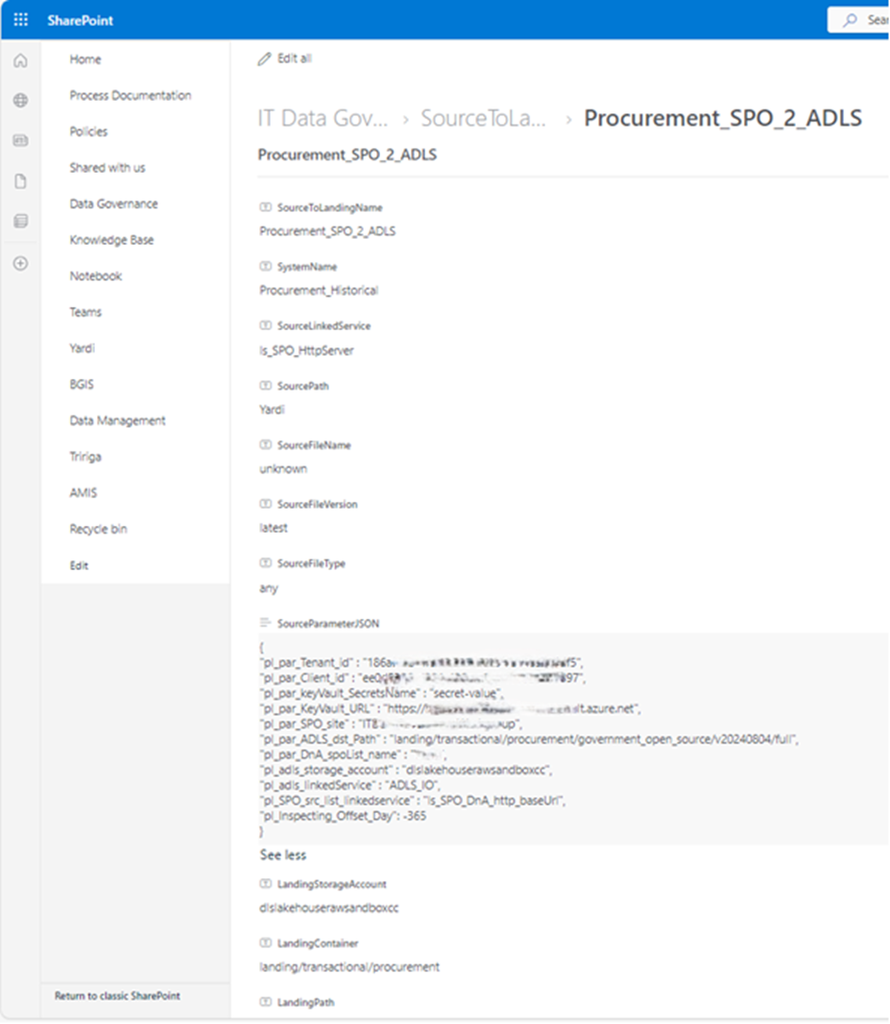

- Metadata driven. All metadata are save on SharePoint list, such as TenantID, ClientID, SPO site name, ADLS account, inspecting offset days for increment loading …. etc.

- Initial full load, then monitor data set status, once it update, incrementally load, for example, on daily basis.

Solution

Retrieves metadata >> retrieves “client Secret” >> request access token >> insect and generate interests data list >> iteratively copy interest data sink to destination storage.

Prerequisite

- Register SharePoint (SPO) application in Azure Active Directory (AAD).

- Grant SPO site permission to registered application in AAD.

- Provision Resource Group, ADF (ASA) and ADLS in your Azure Subscription.

I have other articles to talk those in detail. If you need review, please go to

- Register an application ID on Azure Entra ID (former Active Directory)

- SharePoint Online grants ADF or ASA access site to extract data

Let us begin , Step by Step in detail, I will mention key points for every steps.

Step1:

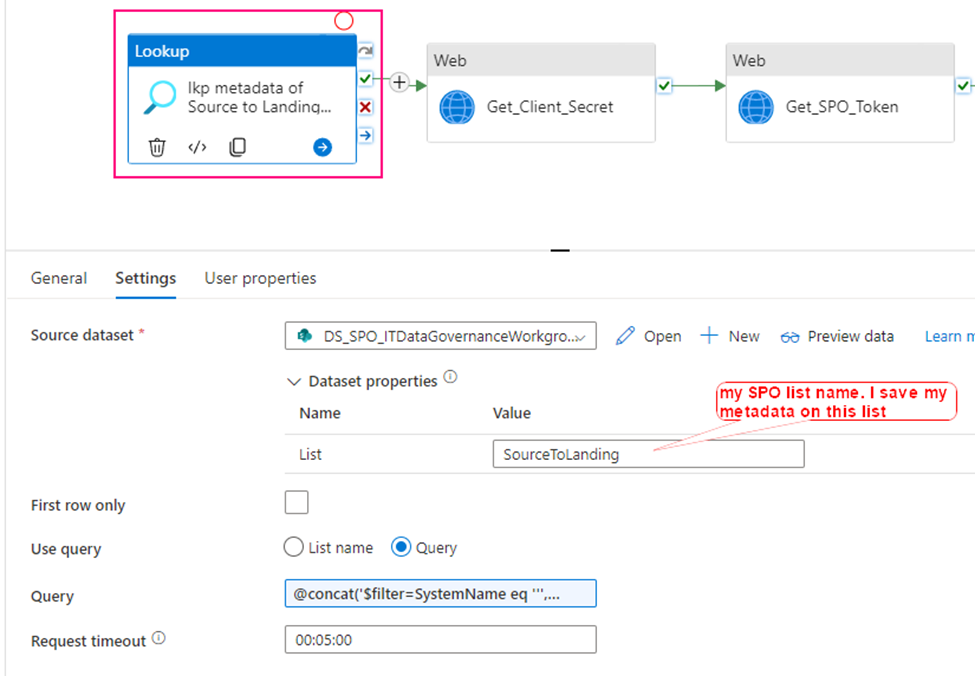

Using Lookup activity to retrieve all metadata from SharePoint Online

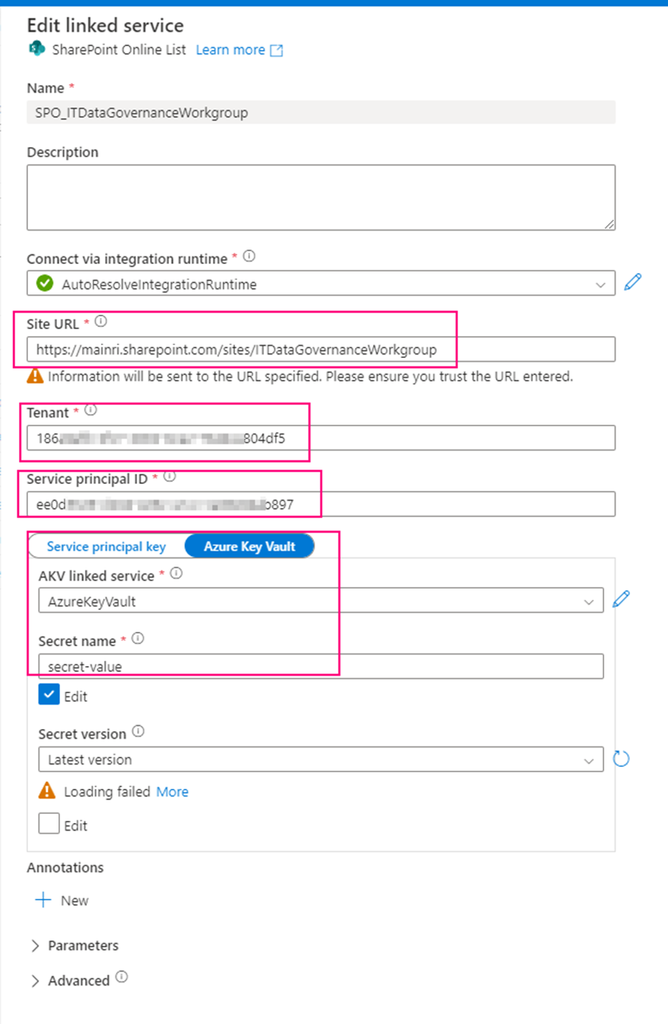

Firstly, create a SharePoint on Line List type Linked Service – SPO_ITDataGovernanceWorkgroup you need provide:

- SharePoint site URL, you should replace it by using yours. it looks like htts://[your_domain].sharepoint.sites/[your_site_name].

mine is

https://mainri.sharepoint.com/sites/ITDataGovernanceWorkgroup. - Tenant ID. The tenant ID under which your application resides. You can find it from Azure portal Microsoft Entra ID (formerly called Azure AD) overview page.

- Service principal ID (Client ID) The application (client) ID of your application registered in Microsoft Entra ID. Make sure to grant SharePoint site permission to this application.

- Service principal Key The client secret of your application registered in Microsoft Entra ID. mine is save in Azure Key Vault

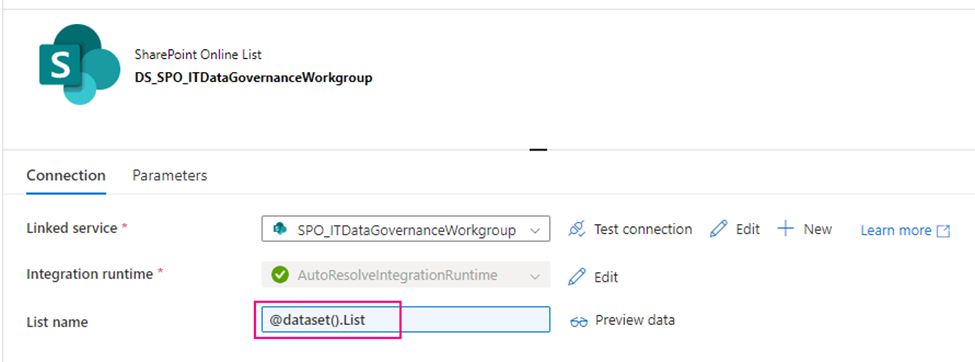

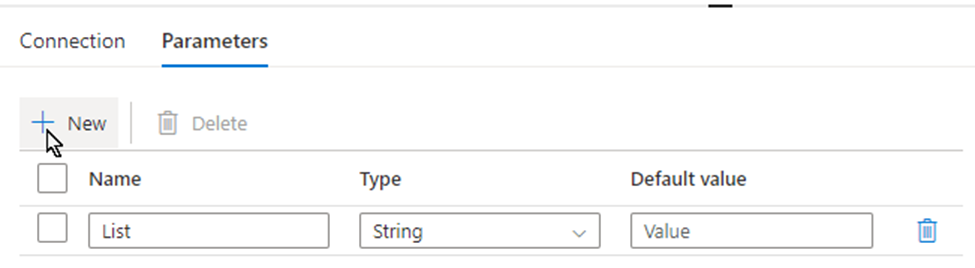

secondly, using above Linked Service create a SharePoint type Dataset – DS_SPO_ITDataGovernanceWorkgroup

parametrize the dataset.

I name the parameter “List“, This parameter lets lookup activity knows where your metadata resides. (mine is called SourceToLanding)

Now, we are ready to configure the Lookup activity

Source dataset: use above created dataset – DS_SPO_ITDataGovernanceWorkgroup

Query: @concat(‘$filter=SystemName eq ”’,pipeline().parameters.System,”’ and StatusValue eq ”Active”’)

This query filters out my interest SPO list where my metadata saves. My metadata list in SPO looks like this

This lookup activity return metadata retrieved from SPO list. Looks like this.

{ “count”: 1, “value”: [ { “ContentTypeID”: “<*** … ***>”, “Title”: “Procurement_SPO_2_ADLS”, “ColorTag”: null, “ComplianceAssetId”: null, “SystemName”: “Procurement_Historical”, “SourceLinkedService”: “ls_SPO_HttpServer”, “SourcePath”: “Y***i”, “SourceFileName”: “unknown”, “SourceFileVersion”: “latest”, “SourceFileType”: “any”,

“SourceParameterJSON”: “{\n\”pl_par_Tenant_id\” : \”< TenantID>\”,\n\”pl_par_Client_id\” : \”<clientID>\”, \n\”pl_par_keyVault_SecretsName\” : \”secret-value\”,\n\”pl_par_KeyVault_URL\” : \”https://**.vault.azure.net\”,\n\”pl_par_SPO_site\” : \”<your SPO Site>\”,\n\”pl_par_ADLS_dst_Path\” : \”landing/transactional/procurement/government_open_source/v20240804/full\”,\n\”pl_par_DnA_spoList_name\” : \”<listName>\”,\n\”pl_adls_storage_account\” : \”<ADLS account>\”,\n\”pl_adls_linkedService\” : \”ADLS_IO\”,\n\”pl_SPO_src_list_linkedservice\” : \”ls_SPO_DnA_http_baseUrl\”,\n\”pl_Inspecting_Offset_Day\”: -365\n}“,

“LandingStorageAccount”: “<storageAccount>”, “LandingContainer”: “<contenter name>”, “LandingPath”: “landing/procurementRaw”, “StatusValue”: “Active”, “Id”: 68, “ContentType”: “Item”, “Modified”: “2024-08-07T16:31:27Z”, “Created”: “2024-08-06T11:18:50Z”, “CreatedById”: 130, “ModifiedById”: 130, “Owshiddenversion”: 14, “Version”: “14.0”, “Path”: “/sites/<SPO siteName>/Lists/Source to Landing” } ], “effectiveIntegrationRuntime”: “AutoResolveIntegrationRuntime (Canada Central)”, “durationInQueue”: { “integrationRuntimeQueue”: 51 } }

response is json format.

My “SourceParameterJSON” value is string, but it well match json format, could be covert to json.

Step 2:

Get Client Secret

To get SPO access token, we need: Tenant ID, Client ID, and Client Secret

Tenant ID:

You can get this from Azure Entra ID (former Azure active Directory)

Client ID:

When you register your application at Azure Entra ID, azure Entra ID will generate one, called Application ID (Client ID). You can get this from Azure Portal >>Entra ID

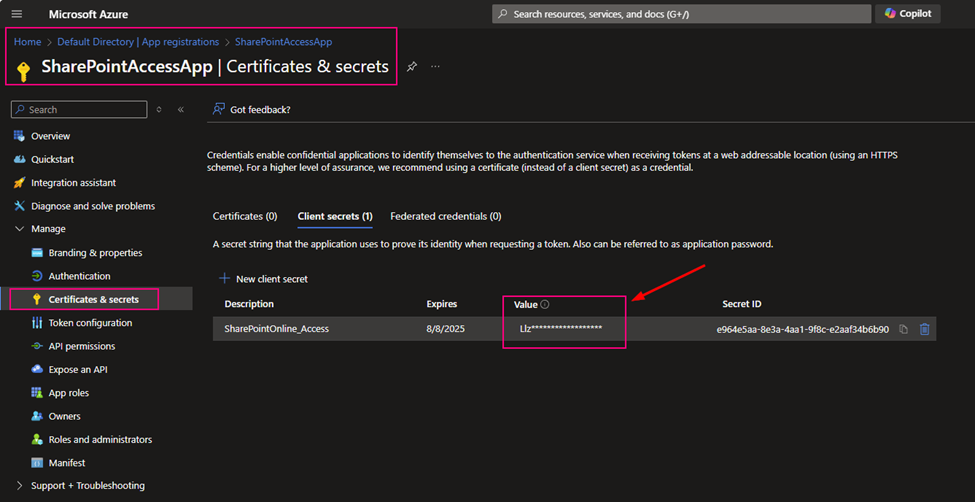

Client Secret:

After you registered your application at Azure Entra ID, you build a certificate secret from Azure Entra ID, and you immediately copied and kept that value. The value will not reappear after that process anymore.

If you are not sure how to register an application at Azure Entra ID, please review my previous article. Register an application ID on Azure Entra ID (former Active Directory)

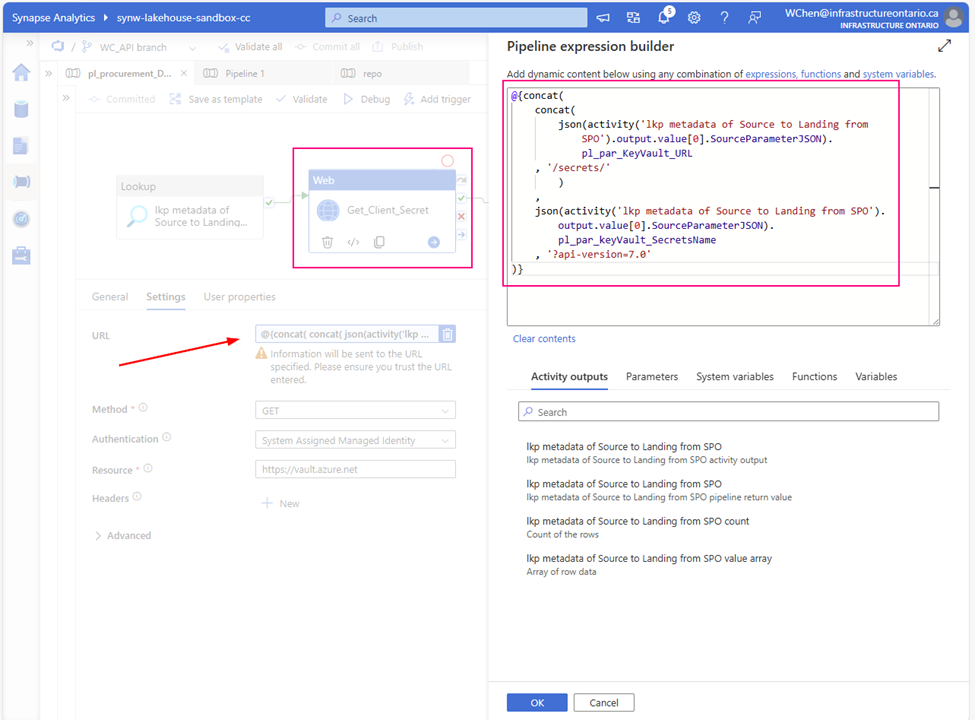

As I mentioned above, my client Secret is saved in azure Key-vault. To get Client Secret, use Web Activity to retrieve Client Secret (if you have the client Secret on hand, you can skip this activity, directly move to next activity Get SPO Token)

Method: GET

Authentication: System Assigned Managed Identity

Resource: https://vault.azure.net

URL:

@{concat(

concat(

json(activity(‘lkp metadata of Source to Landing from SPO’).output.value[0].SourceParameterJSON).pl_par_KeyVault_URL

, ‘/secrets/’

)

, json(activity(‘lkp metadata of Source to Landing from SPO’).output.value[0].SourceParameterJSON).pl_par_keyVault_SecretsName , ‘?api-version=7.0′

)}

Attention:

from above URL content, you can see. this SourceParameterJSON section matches well with json format. BUT it is NOT json, it is a string, so I convert the string to json.

?api-version=7.0 is another key point. You must add to your URL

Returns:

{

“value”: “<masked>”,

“id”: “https://<your key-vault url>.vault.azure.net/secrets/secret-value/< masked >”, “attributes”: { “enabled”: true, “exp”: 1747847487, “created”: 1716311530, “updated”: 1716311530, “recoveryLevel”: “Recoverable” }, “tags”: {}, “ADFWebActivityResponseHeaders”: { “Pragma”: “no-cache”, “x-ms-keyvault-region”: “canadacentral”, “x-ms-request-id”: “66422e67-8ffa-4d4b-92a0-48e726e843e2”, “x-ms-keyvault-service-version”: “1.9.1652.1”, “x-ms-keyvault-network-info”: “conn_type=Ipv4;addr=20.175.210.194;act_addr_fam=InterNetwork;”, “X-Content-Type-Options”: “nosniff”, “Strict-Transport-Security”: “max-age=31536000;includeSubDomains”, “Cache-Control”: “no-cache”, “Date”: “Fri, 09 Aug 2024 12:22:21 GMT”, “Content-Length”: “290”, “Content-Type”: “application/json; charset=utf-8”, “Expires”: “-1” }, “effectiveIntegrationRuntime”: “AutoResolveIntegrationRuntime (Canada Central)”, “executionDuration”: 1, “durationInQueue”: { “integrationRuntimeQueue”: 73 }

}

Step 3:

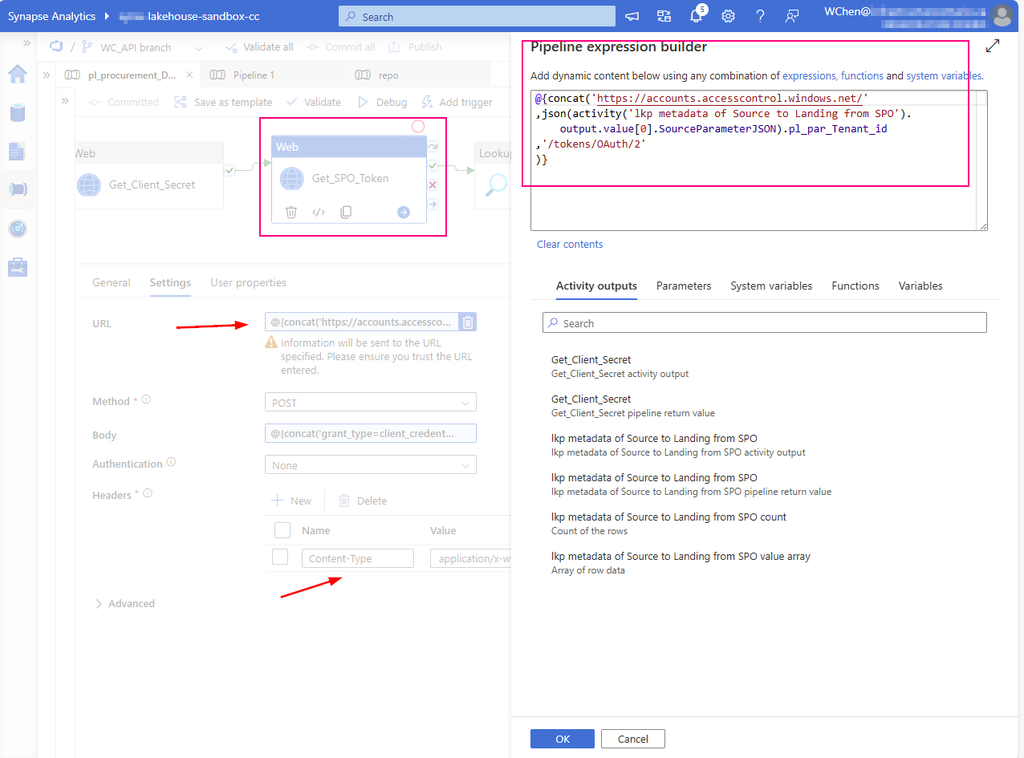

Create a Web Activity to get the access token from SharePoint Online:

URL: https://accounts.accesscontrol.windows.net/[Tenant-ID]/tokens/OAuth/2.

Replace the tenant ID.

mine looks :

@{concat(‘https://accounts.accesscontrol.windows.net/’ ,json(activity(‘lkp metadata of Source to Landing from SPO’).output.value[0].SourceParameterJSON).pl_par_Tenant_id ,’/tokens/OAuth/2′ )}

Method: POST

Headers: Content-Type: application/x-www-form-urlencoded

Body:

grant_type=client_credentials&client_id=[Client-ID]@[Tenant-ID]&client_secret=[Client-Secret]&resource=00000003-0000-0ff1-ce00-000000000000/[Tenant-Name].sharepoint.com@[Tenant-ID]

Replace the client ID (application ID), client secret (application key), tenant ID, and tenant name (of the SharePoint tenant).

mine looks:

@{concat(‘grant_type=client_credentials&client_id=’ , json(activity(‘lkp metadata of Source to Landing from SPO’).output.value[0].SourceParameterJSON).pl_par_Client_id , ‘@’ , json(activity(‘lkp metadata of Source to Landing from SPO’).output.value[0].SourceParameterJSON).pl_par_Tenant_id ,’&client_secret=’ , activity(‘Get_Client_Secret’).output.value , ‘&resource=00000003-0000-0ff1-ce00-000000000000/infrastructureontario.sharepoint.com@’ , json(activity(‘lkp metadata of Source to Landing from SPO’).output.value[0].SourceParameterJSON).pl_par_Tenant_id )}

Attention:

As mentioned at Step 2 , pay attention to json convert for section “SourceParameterJSON”

Return:

{ “token_type”: “Bearer”, “expires_in”: “86399”, “not_before”: “1723205846”, “expires_on”: “1723292546”, “resource”: “00000003-0000-0ff1-ce00-000000000000/<your domain>.sharepoint.com@<your TenantID >”,

“access_token”: “<masked>”,

“ADFWebActivityResponseHeaders”: { “Pragma”: “no-cache”, “Strict-Transport-Security”: “max-age=31536000; includeSubDomains”, “X-Content-Type-Options”: “nosniff”, “x-ms-request-id”: “ce821ffd-8a0e-463b-b2c1-b0efccdd7500”, “x-ms-ests-server”: “2.1.18662.4 – EUS ProdSlices”, “X-XSS-Protection”: “0”, “Cache-Control”: “no-store, no-cache”, “P3P”: “CP=\”DSP CUR OTPi IND OTRi ONL FIN\””, “Set-Cookie”: “fpc=<masked>”, “Date”: “Fri, 09 Aug 2024 12:22:25 GMT”, “Content-Length”: “1453”, “Content-Type”: “application/json; charset=utf-8”, “Expires”: “-1” }, “effectiveIntegrationRuntime”: “AutoResolveIntegrationRuntime (Canada Central)”, “executionDuration”: 0, “durationInQueue”: { “integrationRuntimeQueue”: 0 } }

Step 4:

Look up interest items and generate a list array pass to downstream activities to proceed. e.g. copy!

At this step, we are looking up what data we want to copy. For example,

- The latest version,

- Modified date,

- Item’s type,

…

etc.

you can filter out from SPO item property.

Previously, I have talked the filter in detail in another article. You can review it. ADF or ASA lookup filter Modified date query for SharePoint Online List

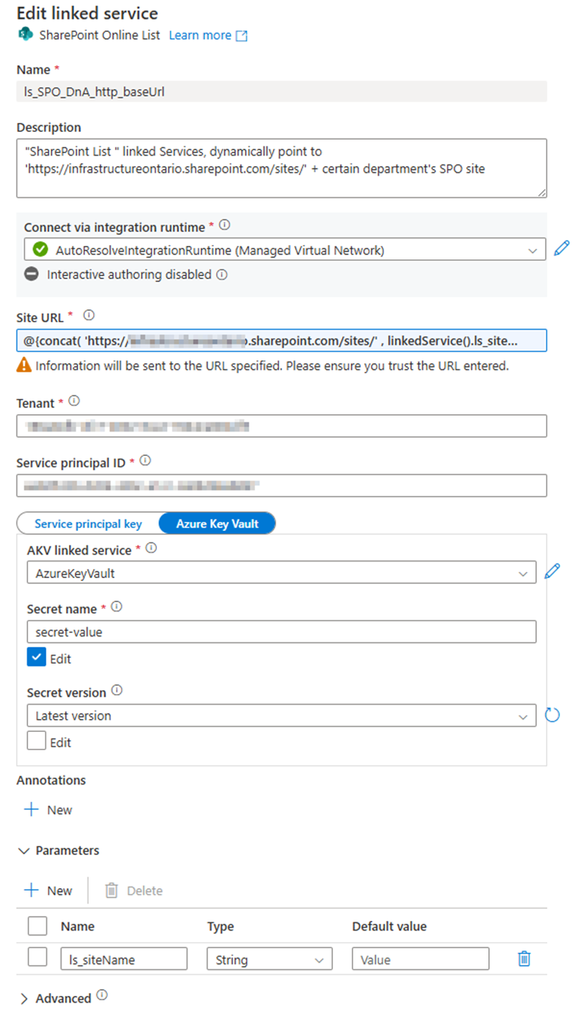

1. Create a Linked Service, type SharePoint list.

Called: “ls_SPO_DnA_http_baseUrl”, Parameteriz the Linked Service

Provide:

- SharePoint site URL

Pattern: https://<your domain>.sharepoint.com/sites/<SPO site Name>

Replace <your domain> and <SPO site Name>

Mine looks:

https://mainri.sharepoint.com/sites/dataengineering - Tenant ID

- Service principal (or says clientID, applicationID)

- Client secret value

we have discussed the TenantID, ClientID and Client secret at “step 1”, please scroll up to see or review my previous related article “Register an application ID on Azure Entra ID (former Active Directory)” - Parameter: ls_siteName

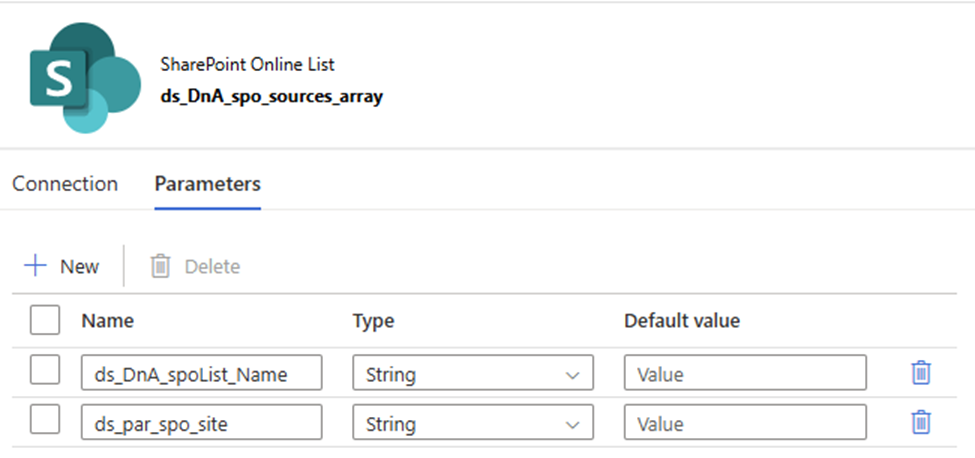

2. Create a SharePoint List type Dataset, Called “ds_DnA_spo_sources_array” and parameteriz the dataset.

Linked service: ls_SPO_DnA_http_baseUrl (you just created)

Parameter:

- ds_DnA_spoList_Name

- ds_par_spo_site

The dataset looks

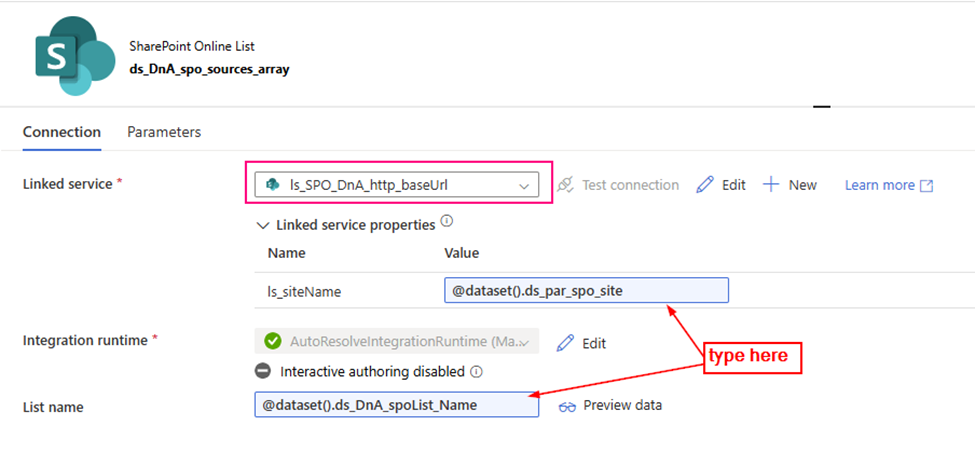

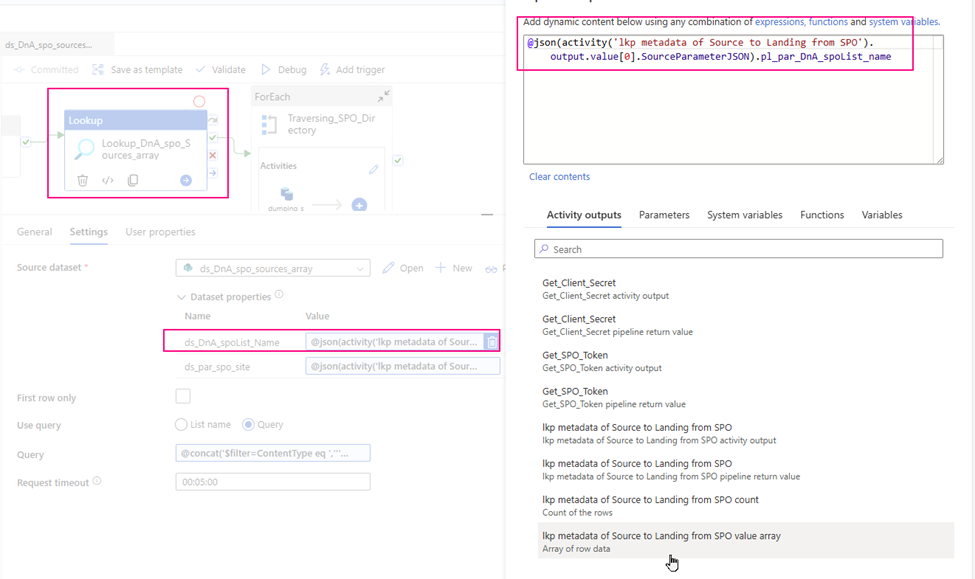

3. configure the Lookup Activity

Use up steam activities return results to configure lookup activity.

- source dataset: ds_DnA_spo_sources_array

- Dynamic content: ds_DnA_spoList_Name:

@json(activity(‘lkp metadata of Source to Landing from SPO’).output.value[0].SourceParameterJSON).pl_par_DnA_spoList_name

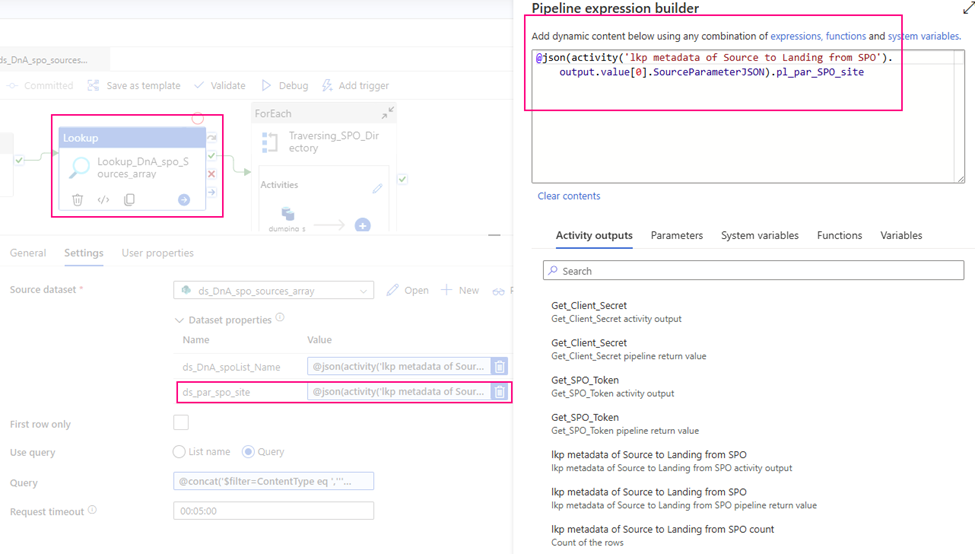

- ds_par_spo_site:

@json(activity(‘lkp metadata of Source to Landing from SPO’).output.value[0].SourceParameterJSON).pl_par_SPO_site

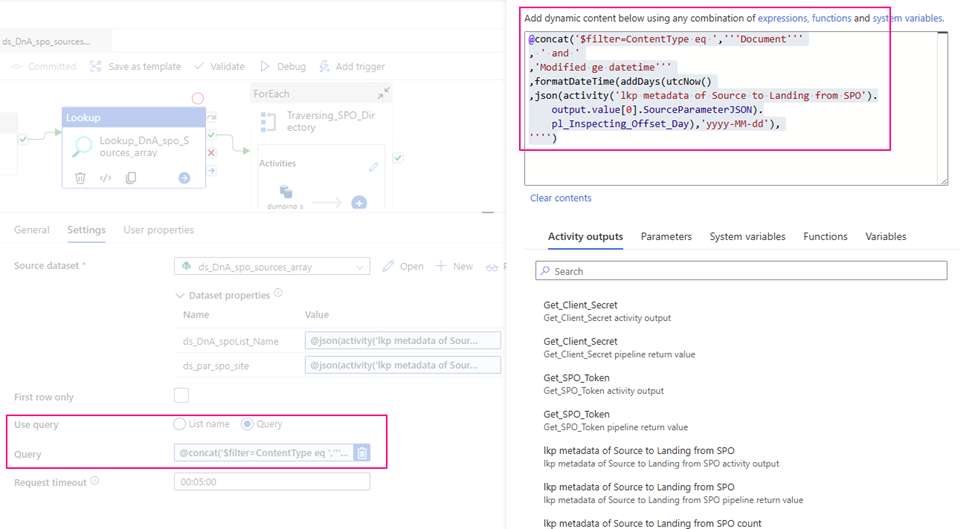

- Query:

I incrementally ingest data, so I use query to filter out interest items only.

@concat(‘$filter=ContentType eq ‘,”’Document”’ , ‘ and ‘ ,’Modified ge datetime”’ ,formatDateTime(addDays(utcNow() ,json(activity(‘lkp metadata of Source to Landing from SPO’).output.value[0].SourceParameterJSON).pl_Inspecting_Offset_Day),’yyyy-MM-dd’), ””)

Return a json array looks like:

{ “count”: 35, “value”: [ …. { “ContentTypeID”: “0x010100EEF491B910D69C439928C661FB186B23”, “Name”: “Yardi_Synapse_Integration_Doc.xlsx”, “ComplianceAssetId”: null, “Title”: null, “Description”: null, “ColorTag”: null, “Id”: 10, “ContentType”: “Document”, “Created”: “2023-06-12T14:38:47Z”, “CreatedById”: 16, “Modified”: “2023-08-17T11:01:23Z”, “ModifiedById”: 61, “CopySource”: null, “ApprovalStatus”: “0”, “Path”: “/sites/ITDataGovernanceWorkgroup/Yardi/Integration”, “CheckedOutToId”: null, “VirusStatus”: “43845”, “IsCurrentVersion”: true, “Owshiddenversion”: 6, “Version”: “4.0” }, …. ], “effectiveIntegrationRuntime”: “AutoResolveIntegrationRuntime (Canada Central)”, “durationInQueue”: { “integrationRuntimeQueue”: 0 } }

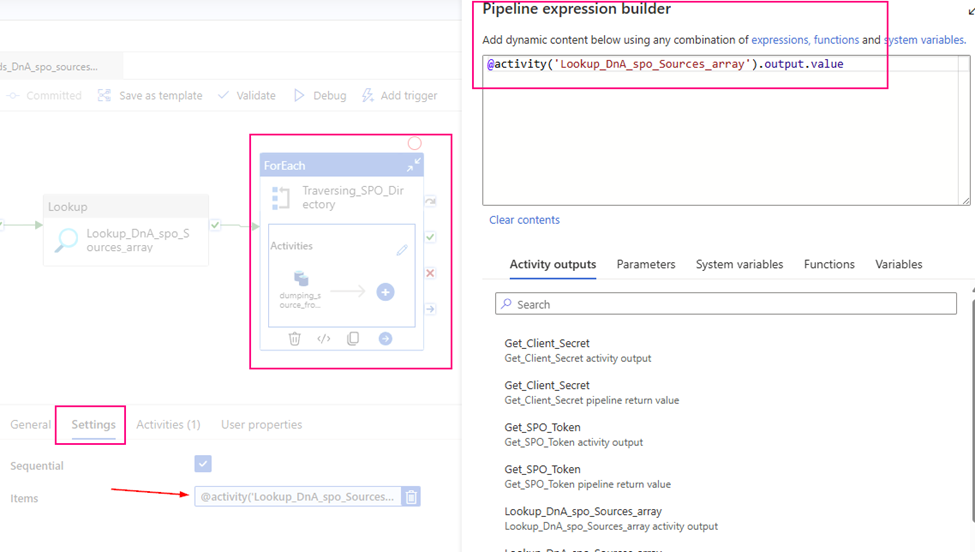

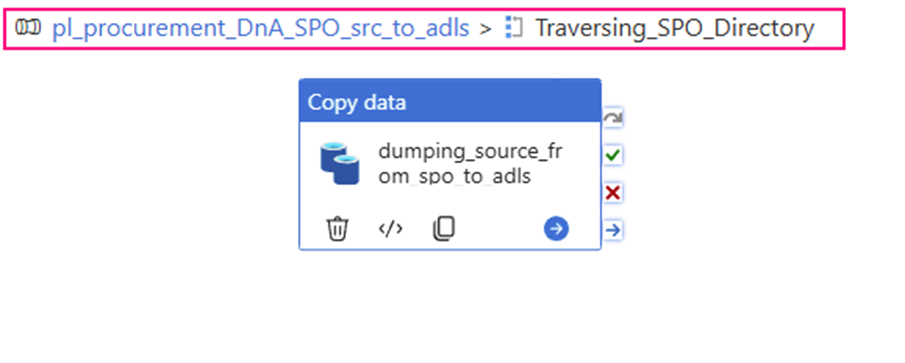

Step 5:

For each travers the interest item list (source data) to copy from SharePoint site

Using up stream lookup (“Lookup_DnA_spo_Sources_array”) output item array to provide its inside copy activity to use.

Items: @activity(‘Lookup_DnA_spo_Sources_array’).output.value

Step 6

Copy those interest datasets from SharePoint online site one by one

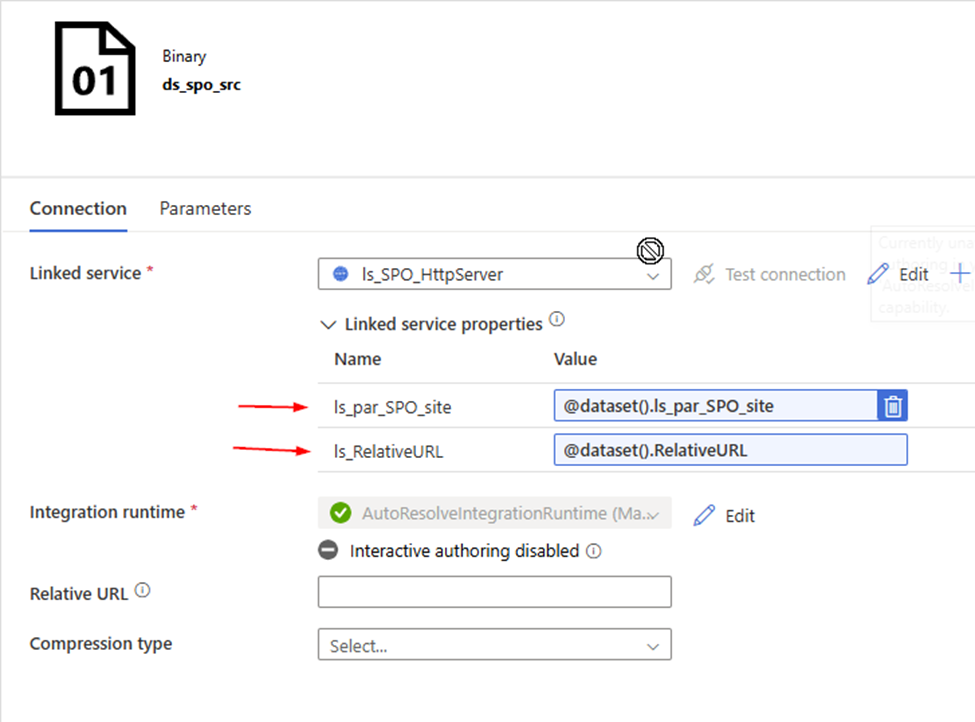

1. Create a HTTP type linked Service, called “ls_SPO_HttpServer” and parameteriz it.

- Base URL:

Mine looks this:

@{concat( ‘https://<your domain>.sharepoint.com/sites/’ ,linkedService().ls_par_SPO_site ,’/_api/web/GetFileByServerRelativeUrl(”’ ,linkedService().ls_RelativeURL ,”’)/$value’ )}

- Parameter:

ls_par_SPO_site

ls_RelativeURL

The value of ‘GetFileByServerRelativeUrl’ is our key point of work. It points to the specific URL location of the dataset. In fact, all upstream work efforts are aimed at generating specific content for this link item!

Cheers, we are almost there!

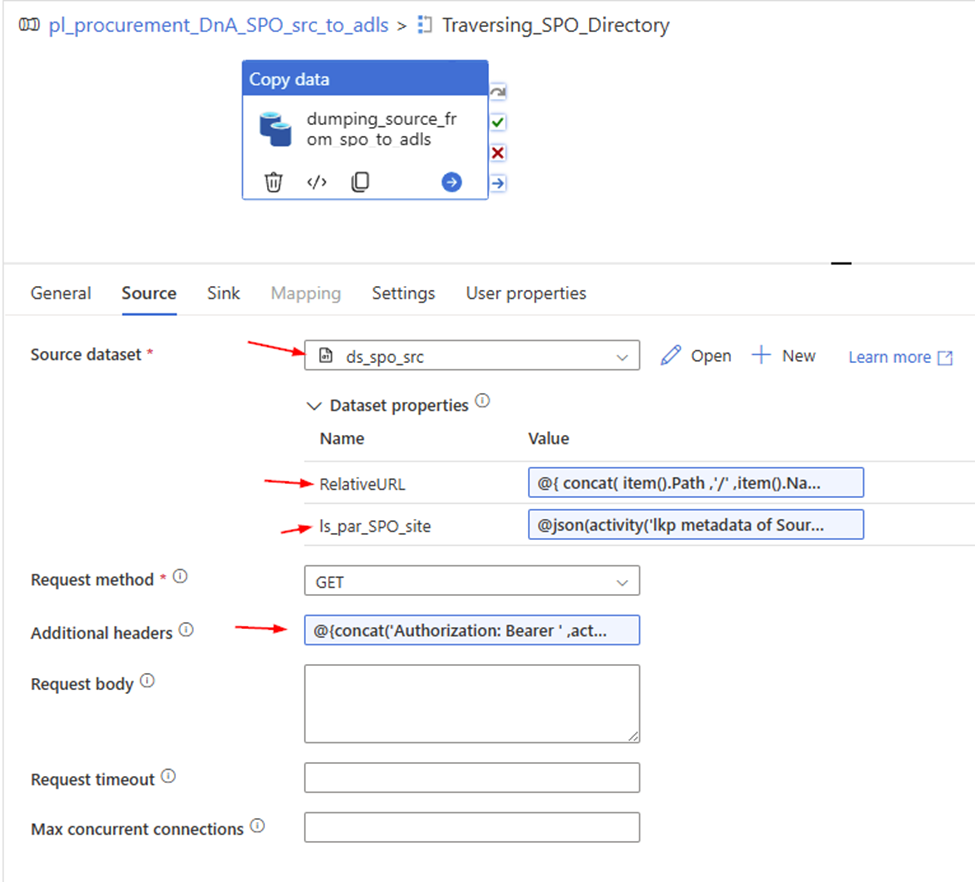

2. Create a Http Binary source dataset , called “ds_spo_src”, Parameterize it

- Parameter:

RelativeURL

ls_par_SPO_site

- Linked Service: ls_SPO_HttpServer , we just created.

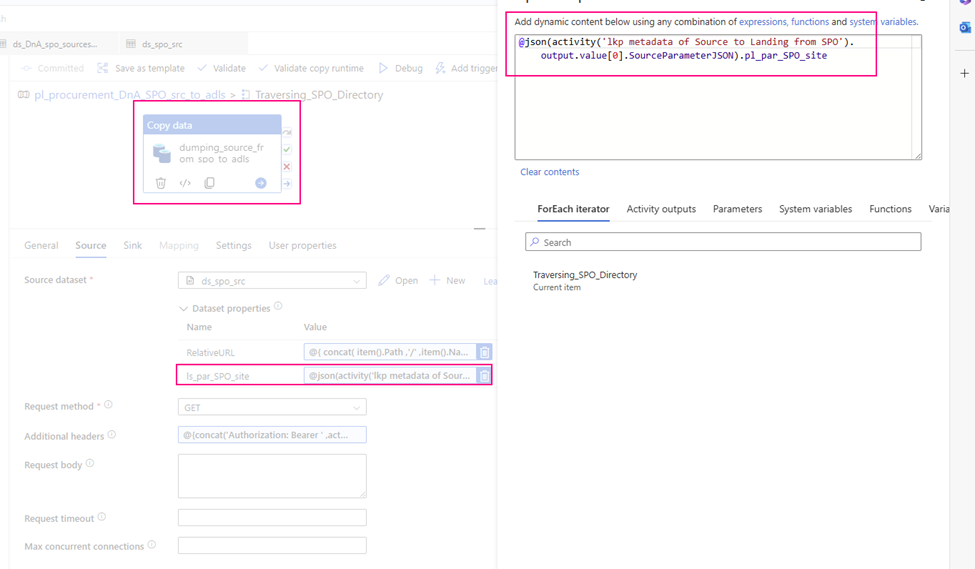

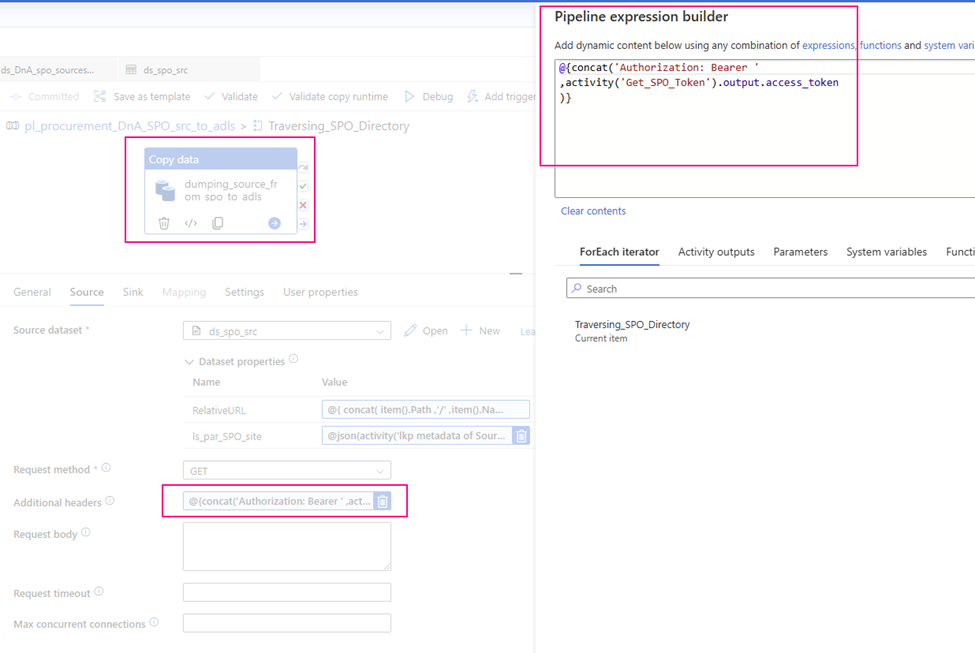

3. Configure copy activity’s “Source”

- Request method: GET

- Source dataset: ds_spo_src

RelativeURL:

@{ concat( item().Path ,’/’ ,item().Name ) }

ls_par_SPO_site:

@json(activity(‘lkp metadata of Source to Landing from SPO’).output.value[0].SourceParameterJSON).pl_par_SPO_site

- Additional header:

@{concat(‘Authorization: Bearer ‘, activity(‘<Web-activity-name>’).output.access_token)}

which uses the Bearer token generated by the upstream Web activity as authorization header.

Mine looks:

@{concat(‘Authorization: Bearer ‘ ,activity(‘Get_SPO_Token’).output.access_token )}

Caution: after ‘Bearer’ HAS a space!

Entire Source configuration looks:

4. Configure copy activity’s “sink”

Our aim is incrementally ingesting source data from SharePoint sink to ADLS. Sink side is normal process.

Let’s go.

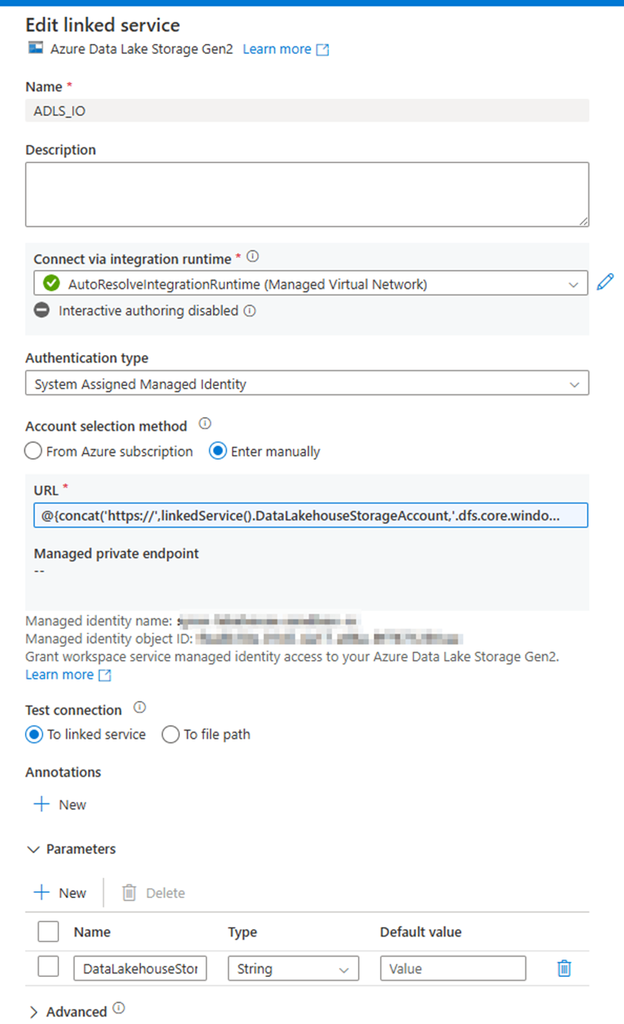

1. Create an ADLS Gen2 Linked service, called “ADLS_IO”, parameterize it.

Parameter: DataLakehouseStorageAccount

URL:

@{concat(‘https://’,linkedService().DataLakehouseStorageAccount,’.dfs.core.windows.net/’)}

2. Create an ADLS Gen2 Binary type dataset by using the above linked service (“ADLS_IO”), called “ds_adls_dst” and parameterize it.

- Parameter:

storage_Account

ds_par_dstpath

ds_par_dst_filename

Looks like:

3. Configure copy activity sink

Dataset: ds_adls_dst

Fill in parameters

storage_Account:

@json(activity(‘lkp metadata of Source to Landing from SPO’).output.value[0].SourceParameterJSON).pl_adls_storage_account

ds_par_dstpath:

@json(activity(‘lkp metadata of Source to Landing from SPO’).output.value[0].SourceParameterJSON).pl_par_ADLS_dst_Path

ds_par_dst_filename

@{ replace( concat( ‘rundate=’ , formatDateTime(convertTimeZone(utcNow(),’UTC’ ,’Eastern Standard Time’ ),’yyyy-MM-dd’) , item().path , ‘/’ , item().Name ) , ‘/sites’, ” ) }

The entire Sink configuration looks like:

That’s all for copy activity configuration.

Conclusion

Automatedly ingest source data from SharePoint online site to other storage is frequently requirement.

The key steps are:

Azure environment side:

Register application at Azure Entra ID, get

- Tenant ID

- Application ID (Client ID)

- Client Secret value

SharePoint side:

Grant the application to access SPO Azure Data Factory or Synapse Analytics:

Azure Data Factory or Synapse Analytics side:

1. Request SPO access token,

Send to : https://accounts.accesscontrol.windows.net/[Tenant-ID]/tokens/OAuth/2

Body POST

grant_type=client_credentials&client_id=[Client-ID]@[Tenant-ID]&client_secret=[Client-Secret]&resource=00000003-0000-0ff1-ce00-000000000000/[Tenant-Name].sharepoint.com@[Tenant-ID]

Heads with : Content-Type: application/x-www-form-urlencoded

2. Http linked Service point to SharePoint site

https://[sharepoint-domain-name].sharepoint.com/sites/[sharepoint-site]/_api/web/GetFileByServerRelativeUrl(‘/sites/[sharepoint-site]/[relative-path-to-file]’)/$value.

Authentication type: Anonymous

dataset use GET method

Additional header:

@{concat(‘Authorization: Bearer ‘, activity(‘<Web-activity-name>’).output.access_token)}

Pay attention to add a space after ‘Bearer’

Well, we have finished all. Please do not hesitate to contact me if you have any questions at william . chen@mainri.ca

(remove all space from the email account 😊)

Appendix:

1. Microsoft: Copy data from SharePoint Online List by using Azure Data Factory or Azure Synapse Analytics

2. Microsoft: Register an application with the Microsoft identity platform