We can mount Azure Data Lake Storage (ADLS), Azure Blob Storage, or other compatible storage to Databricks using dbutils.fs.mount(), with either an account key or a SAS token for authentication.

mount()

dbutils.fs.help("mount")

Here’s the general syntax:

dbutils.fs.mount(

source = "<storage-url>",

mount_point = "/mnt/<mount-name>",

extra_configs = {"<conf-key>":dbutils.secrets.get(scope="<scope-name>", key="<key-name>")})

<storage-url>

Blob:

storage_url = f"wasbs://{container_name}@{storage_account_name}.blob.core.windows.net"

ADLS:

storage_url = f"abfss://{container_name}@{storage_account_name}.dfs.core.windows.net/"

<conf-key>

Blob:

conf_key = f"fs.azure.account.key.{storage_account_name}.blob.core.windows.net"

ADLS:

conf_key = f"fs.azure.account.key.{storage_account_name}.dfs.core.windows.net"
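The two patterns above differ only in the URL scheme and the endpoint suffix, so they can be generated from one small lookup table. The following is a minimal sketch, not part of dbutils: the helper names `build_storage_url` and `build_account_key_conf` and the `kind` parameter are hypothetical, introduced here only for illustration.

```python
# Hypothetical helpers (not part of dbutils) that assemble the strings shown above.
# kind -> (URL scheme, endpoint suffix, trailing slash used in the pattern above)
_ENDPOINTS = {
    "blob": ("wasbs", "blob.core.windows.net", ""),
    "adls": ("abfss", "dfs.core.windows.net", "/"),
}


def build_storage_url(container_name, storage_account_name, kind="adls"):
    """Return the value for the `source` argument of dbutils.fs.mount()."""
    scheme, host_suffix, trailing = _ENDPOINTS[kind]  # KeyError on an unknown kind
    return f"{scheme}://{container_name}@{storage_account_name}.{host_suffix}{trailing}"


def build_account_key_conf(storage_account_name, kind="adls"):
    """Return the account-key configuration name used in `extra_configs`."""
    _, host_suffix, _ = _ENDPOINTS[kind]
    return f"fs.azure.account.key.{storage_account_name}.{host_suffix}"
```

In a notebook, the results would be passed as `source` and as the key of the `extra_configs` dictionary, with the secret value still coming from `dbutils.secrets.get(...)`.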

mounts()

dbutils.fs.help("mounts")

To list all mount points, use:

dbutils.fs.mounts()
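Each entry returned by dbutils.fs.mounts() exposes a `mountPoint` and a `source` attribute, so the listing can be searched for a particular mount. The sketch below assumes only those two attributes; the helper name `find_mount` and the sample data are hypothetical, and the namedtuple is just a stand-in so the example runs outside Databricks.

```python
from collections import namedtuple


def find_mount(mounts, mount_point):
    """Return the source URL mounted at `mount_point`, or None if nothing is mounted there."""
    for m in mounts:
        if m.mountPoint == mount_point:
            return m.source
    return None


# In a notebook you would call: find_mount(dbutils.fs.mounts(), "/mnt/<mount-name>")
# Stand-in data (hypothetical account and container names) for running elsewhere:
MountInfo = namedtuple("MountInfo", ["mountPoint", "source"])
sample_mounts = [MountInfo("/mnt/raw", "abfss://raw@acct.dfs.core.windows.net/")]

found = find_mount(sample_mounts, "/mnt/raw")
missing = find_mount(sample_mounts, "/mnt/other")
```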

unmount()

dbutils.fs.help("unmount")

dbutils.fs.unmount("/mnt/<mount-name>")

refreshMounts()

Forces all machines in the cluster to refresh their mount cache, ensuring they receive the most recent information.

dbutils.fs.help("refreshMounts")

dbutils.fs.refreshMounts()

updateMount()

Works like mount(), but updates an existing mount point instead of creating a new one.

dbutils.fs.updateMount(

source = "<new-storage-url>",

mount_point = "/mnt/<existing-mount-point>",

extra_configs = {"<conf-key>":dbutils.secrets.get(scope="<scope-name>", key="<key-name>")})

Mount storage

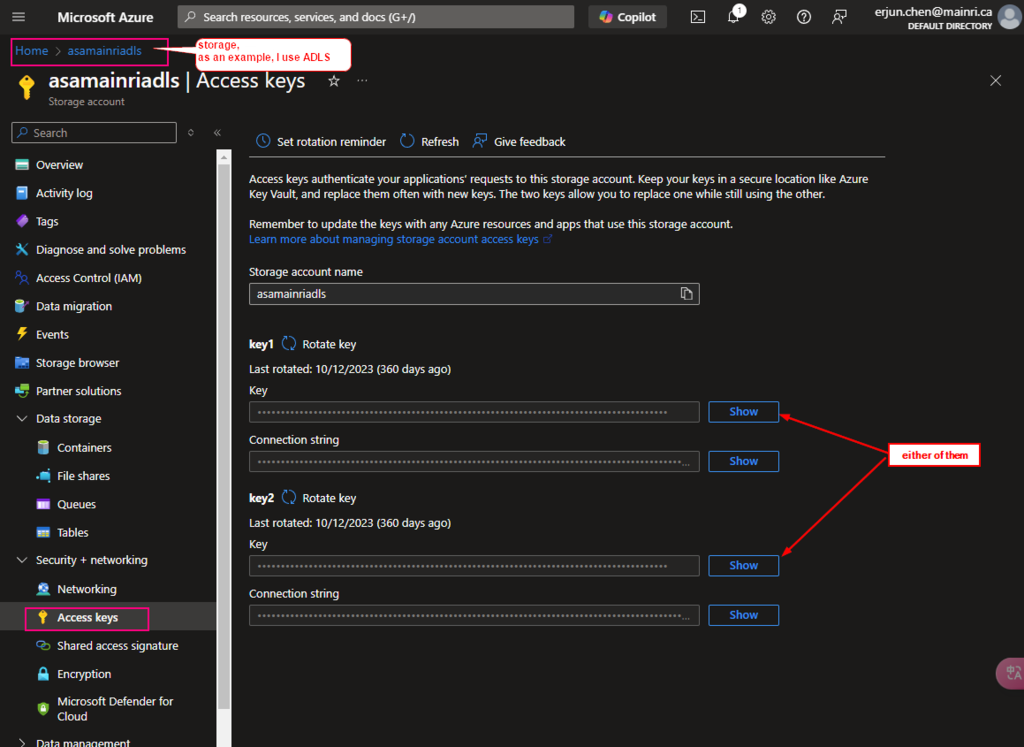

You can get the access key from the Azure Portal: Storage account > Security + networking > Access keys.

Mounting Azure Data Lake Storage (ADLS) Gen2 to DBFS

Set up your storage account details:

- Storage URL: Use the appropriate URL for your data, e.g., abfss://<file-system>@<storage-account>.dfs.core.windows.net/ for ADLS Gen2.

- Mount point: Choose a directory under /mnt/ in the Databricks file system (DBFS) at which to mount the storage.

- Extra configs: You usually provide your credentials here, often through a secret scope.

Mount the ADLS storage:

storage_account_name = "<your-storage-account-name>"

container_name = "<your-container-name>"

mount_point = "/mnt/<your-mount-name>"

# Use a secret scope to retrieve the account key

configs = {"fs.azure.account.key." + storage_account_name + ".dfs.core.windows.net": dbutils.secrets.get(scope = "<scope-name>", key = "<key-name>")}

# Perform the mount

dbutils.fs.mount(

source = f"abfss://{container_name}@{storage_account_name}.dfs.core.windows.net/",

mount_point = mount_point,

extra_configs = configs)
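Running a mount cell a second time for the same mount point typically fails because the directory is already mounted, so it can help to check the mount listing first. The following is a minimal sketch under stated assumptions: `mount_if_absent` is a hypothetical helper, `dbutils` is passed in explicitly, and the small fake object exists only so the example runs outside a Databricks notebook.

```python
from types import SimpleNamespace


def mount_if_absent(dbutils, source, mount_point, extra_configs):
    """Mount `source` at `mount_point` unless something is already mounted there.

    Returns True if a new mount was created, False if the mount point was already in use.
    """
    if any(m.mountPoint == mount_point for m in dbutils.fs.mounts()):
        return False
    dbutils.fs.mount(source=source, mount_point=mount_point, extra_configs=extra_configs)
    return True


class _FakeFs:
    """Stand-in for dbutils.fs that only records mounts in memory (illustration only)."""

    def __init__(self):
        self._mounts = []

    def mounts(self):
        return list(self._mounts)

    def mount(self, source, mount_point, extra_configs):
        self._mounts.append(SimpleNamespace(mountPoint=mount_point, source=source))
        return True


fake_dbutils = SimpleNamespace(fs=_FakeFs())
demo_source = "abfss://demo@acct.dfs.core.windows.net/"  # hypothetical names
first = mount_if_absent(fake_dbutils, demo_source, "/mnt/demo", {})
second = mount_if_absent(fake_dbutils, demo_source, "/mnt/demo", {})
```

In a notebook, the real `dbutils` object would be passed in place of `fake_dbutils`, together with the `configs` dictionary built from the secret scope.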

Mount Azure Blob Storage to DBFS

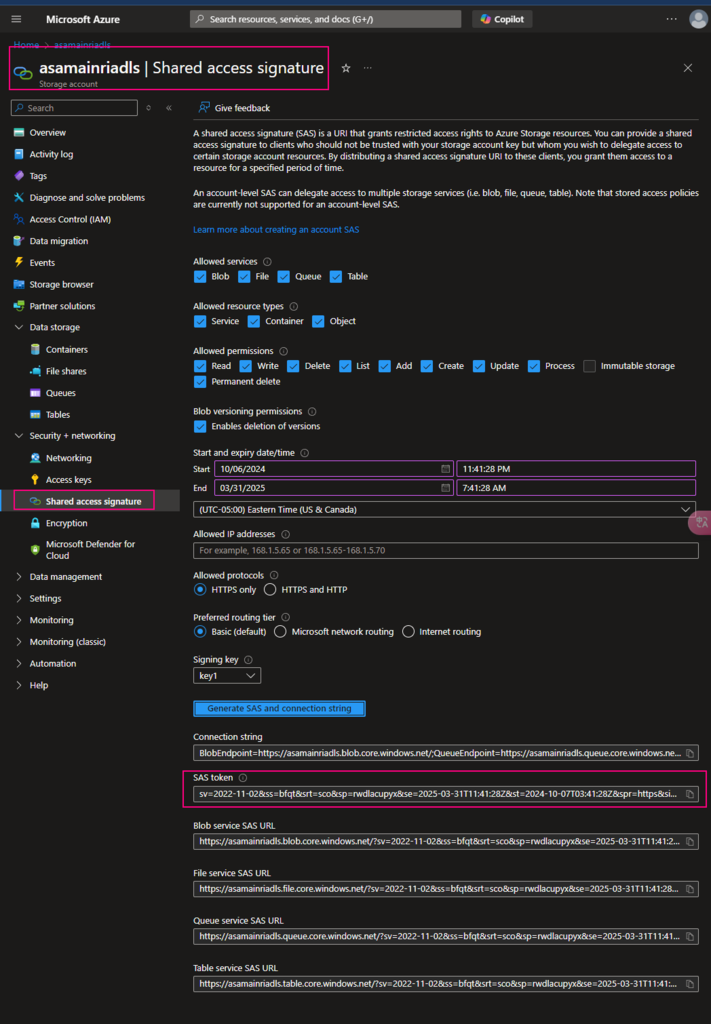

We can mount Azure Blob Storage using either an account key or a SAS token.

dbutils.fs.mount(

source = "wasbs://<container-name>@<storage-account-name>.blob.core.windows.net",

mount_point = "/mnt/<mount-name>",

extra_configs = {"<conf-key>": "<account key or SAS token>"}

)

With an account key, <conf-key> is

fs.azure.account.key.<storage-account-name>.blob.core.windows.net

With a SAS (shared access signature) token, <conf-key> is

fs.azure.sas.<container-name>.<storage-account-name>.blob.core.windows.net
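The choice between the two Blob configuration names can be captured in one function. This is only a sketch: `blob_conf_key` and its `auth` parameter are hypothetical names introduced for illustration, not part of dbutils or any Azure library.

```python
def blob_conf_key(storage_account_name, container_name=None, auth="account_key"):
    """Return the `extra_configs` key for a Blob mount, for the given auth method."""
    host = f"{storage_account_name}.blob.core.windows.net"
    if auth == "account_key":
        # The account-key setting is scoped to the whole storage account.
        return f"fs.azure.account.key.{host}"
    if auth == "sas":
        # The SAS setting is scoped to a single container, so its name is required.
        if not container_name:
            raise ValueError("container_name is required for SAS authentication")
        return f"fs.azure.sas.{container_name}.{host}"
    raise ValueError(f"unknown auth method: {auth!r}")
```

The returned string would be used as the dictionary key in `extra_configs`, with the account key or SAS token (ideally read from a secret scope) as its value.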

Please do not hesitate to contact me if you have any questions at William . chen @ mainri.ca

(remove all spaces from the email address 😊)