In Azure Databricks, logging is crucial for monitoring, debugging, and auditing your notebooks, jobs, and applications. Although Databricks runs on a distributed architecture, notebooks and jobs execute standard Python, so you can use familiar Python logging tools alongside features specific to the Databricks environment, such as Spark logging and MLflow tracking.

Python’s logging module provides a versatile logging system: it records messages at different severity levels and lets you control how they are presented. To get started, import the module into your program, as shown below:

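```python
import logging

logging.debug("A debug message")      # not shown with the default configuration
logging.info("An info message")       # not shown with the default configuration
logging.warning("A warning message")
logging.error("An error message")
logging.critical("A critical message")
```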

Log levels in Python

Log levels define the severity of the event being logged. For example, a message logged at the INFO level indicates a normal, expected event, while one logged at the ERROR level signifies that an unexpected error has occurred.

Each log level in Python is associated with a number (from 10 to 50) and has a corresponding module-level method in the logging module as demonstrated in the previous example. The available log levels in the logging module are listed below in increasing order of severity:

Logging Level Quick Reference

| Level | Meaning | When to Use |
|---|---|---|
| DEBUG (10) | Detailed debugging info | Development |
| INFO (20) | Normal messages | Job progress |
| WARNING (30) | Minor issue | Non-critical issues |
| ERROR (40) | Failure | Recoverable errors |
| CRITICAL (50) | System failure | Stop the job |

It’s important to always use the most appropriate log level so that you can quickly find the information you need. For instance, logging a message at the WARNING level will help you find potential problems that need to be investigated, while logging at the ERROR or CRITICAL level helps you discover problems that need to be rectified immediately.

By default, the logging module will only produce records for events that have been logged at a severity level of WARNING and above.
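A minimal sketch of both points: the level constants are plain integers, and a logger with no level of its own inherits the root logger's threshold, which is WARNING in a fresh Python interpreter. The logger name `default_level_demo` is hypothetical, and output goes to an in-memory stream so the example runs anywhere. If something in your environment has already reconfigured the root logger, the effective level may differ.

```python
import io
import logging

# Level constants are plain integers; getLevelName maps them back to names.
for level in (logging.DEBUG, logging.INFO, logging.WARNING, logging.ERROR, logging.CRITICAL):
    print(level, logging.getLevelName(level))

# Capture output in memory so the example does not depend on console settings.
buffer = io.StringIO()
handler = logging.StreamHandler(buffer)
handler.setFormatter(logging.Formatter("%(levelname)s:%(name)s:%(message)s"))

# "default_level_demo" is a hypothetical name; the logger gets no level of its
# own, so it falls back to the root logger's level (WARNING by default).
demo = logging.getLogger("default_level_demo")
demo.addHandler(handler)
demo.propagate = False  # keep these records away from any root handlers

demo.debug("A debug message")    # dropped: 10 < 30
demo.info("An info message")     # dropped: 20 < 30
demo.warning("A warning message")
demo.error("An error message")
demo.critical("A critical message")

print(logging.getLevelName(demo.getEffectiveLevel()))
print(buffer.getvalue(), end="")
```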

Logging basic configuration

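Call logging.basicConfig() before any logging functions such as logging.info() or logging.warning(). Those module-level functions call basicConfig() automatically when the root logger has no handlers, so an explicit call made afterwards has no effect. basicConfig() is a one-off configuration facility: once the root logger has handlers, further calls do nothing unless you pass force=True (Python 3.8+).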
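A minimal sketch of the "first call wins" behavior, writing to in-memory streams instead of files so it runs anywhere. The first call uses `force=True` only so the example starts from a clean root logger; this matters in environments where the root logger may already have handlers, for example because an earlier cell configured logging, in which case a plain basicConfig() call silently does nothing.

```python
import io
import logging

first = io.StringIO()
second = io.StringIO()

# force=True removes any root handlers that were set up earlier, so the
# example starts from a clean slate.
logging.basicConfig(stream=first, level=logging.INFO,
                    format="%(levelname)s %(message)s", force=True)

# The root logger already has a handler, so this call changes nothing:
# the level stays INFO and output still goes to `first`.
logging.basicConfig(stream=second, level=logging.DEBUG, format="%(message)s")

logging.debug("dropped: root level is still INFO")
logging.info("configured once")

# force=True closes and removes the existing root handlers before applying
# the new configuration.
logging.basicConfig(stream=second, level=logging.DEBUG,
                    format="%(message)s", force=True)
logging.debug("reconfigured")

print(repr(first.getvalue()))   # 'INFO configured once\n'
print(repr(second.getvalue()))  # 'reconfigured\n'
```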

logging.basicConfig() example

```python
import logging
import os
from datetime import datetime

# Build a timestamp-based run ID so each run writes to its own file
run_id = datetime.now().strftime("%Y%m%d_%H%M%S")

# Write the log to a file on DBFS (through the /dbfs mount)
log_file = f"/dbfs/tmp/my_pipeline/logs/run_{run_id}.log"

# basicConfig does not create missing directories, so create the folder first
os.makedirs(os.path.dirname(log_file), exist_ok=True)

# Configure the root logger once, before any log calls
logging.basicConfig(
    filename=log_file,
    level=logging.INFO,
    format="%(asctime)s - %(levelname)s - %(message)s",
)

# Create (or retrieve) a named logger that you will use to write log messages.
# The string "pipeline" is just a name for the logger. It can be anything:
# "etl", "my_app", "sales_job", "abc123"
logger = logging.getLogger("pipeline")
```

Simple logging example

import logging

from datetime import datetime

run_id = datetime.now().strftime("%Y%m%d_%H%M%S")

log_file = f"/dbfs/tmp/my_pipeline/logs/run_{run_id}.log"

logging.basicConfig(

filename=log_file,

level=logging.INFO,

format="%(asctime)s — %(levelname)s — %(message)s",

)

logger = logging.getLogger("pipeline")

logger.info("=== Pipeline Started ===")

try:

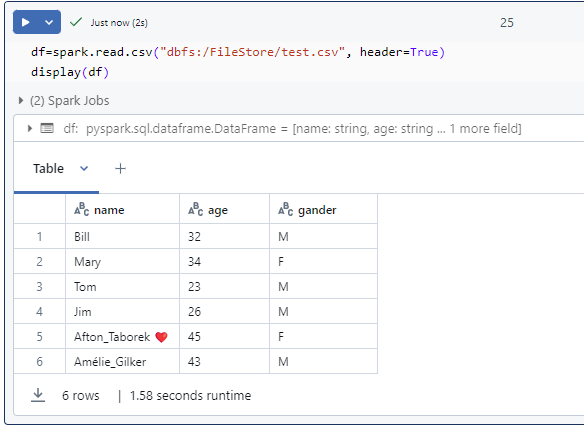

logger.info("Step 1: Read data")

df = spark.read.csv(...)

logger.info("Step 2: Transform")

df2 = df.filter(...)

logger.info("Step 3: Write output")

df2.write.format("delta").save(...)

logger.info("=== Pipeline Completed Successfully ===")

except Exception as e:

logger.error(f"Pipeline Failed: {e}")

raise

The log output looks like this:

```text
2025-11-21 15:05:01,112 - INFO - === Pipeline Started ===
2025-11-21 15:05:01,213 - INFO - Step 1: Read data
2025-11-21 15:05:01,315 - INFO - Step 2: Transform
2025-11-21 15:05:01,417 - INFO - Step 3: Write output
2025-11-21 15:05:01,519 - INFO - === Pipeline Completed Successfully ===
```
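The `except` block in the simple example records only the exception message. A minimal sketch, assuming you also want the stack trace in the log: `logger.exception()` (equivalent to `logger.error(..., exc_info=True)`) logs at ERROR level and appends the traceback. The failing step is a stand-in `ZeroDivisionError`, and the logger name and in-memory stream are hypothetical, used only to keep the example self-contained.

```python
import io
import logging

buffer = io.StringIO()
handler = logging.StreamHandler(buffer)
handler.setFormatter(logging.Formatter("%(levelname)s - %(message)s"))

logger = logging.getLogger("pipeline_traceback_demo")  # hypothetical name
logger.setLevel(logging.INFO)
logger.addHandler(handler)
logger.propagate = False

try:
    logger.info("Step 1: Read data")
    10 / 0  # stand-in for a failing pipeline step
except Exception as e:
    # exception() logs at ERROR level and adds the current traceback;
    # it is meant to be called from inside an except block.
    logger.exception("Pipeline Failed: %s", e)

print(buffer.getvalue())
```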

Log Rotation (Daily Files)

Log rotation means your log file does not grow forever. Instead, a new log file is created automatically each day (or each hour, week, and so on), and only a fixed number of old files is kept.

This prevents:

- huge log files

- storage overflow

- long-term disk growth

- difficult debugging

- slow I/O

Log rotation is very common in production systems (Databricks, Linux, application servers, databases). Without rotation, a single file keeps growing; with daily rotation, you get one file per day:

```text
my_log.log (today)
my_log.log.2025-11-24 (yesterday)
my_log.log.2025-11-23
my_log.log.2025-11-22
```

Python code for daily log rotation

```python
import logging
from logging.handlers import TimedRotatingFileHandler

handler = TimedRotatingFileHandler(
    # UC volume paths have the form /Volumes/<catalog>/<schema>/<volume>/...
    "/Volumes/my_catalog/my_schema/logs/my_log.log",
    when="midnight",  # roll over at midnight
    interval=1,       # every 1 day
    backupCount=30,   # keep the last 30 rotated files
)

formatter = logging.Formatter("%(asctime)s - %(levelname)s - %(message)s")
handler.setFormatter(formatter)

logger = logging.getLogger("rotating_logger")
logger.setLevel(logging.INFO)
logger.addHandler(handler)

logger.info("Log rotation enabled")
```
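To check the naming scheme without waiting for midnight, you can force a rollover with the handler's `doRollover()` method. This sketch uses a temporary local directory instead of a volume so it runs anywhere; the logger name is hypothetical.

```python
import logging
import os
import re
import tempfile
from logging.handlers import TimedRotatingFileHandler

log_dir = tempfile.mkdtemp()
log_path = os.path.join(log_dir, "my_log.log")

handler = TimedRotatingFileHandler(log_path, when="midnight", interval=1, backupCount=30)
handler.setFormatter(logging.Formatter("%(asctime)s - %(levelname)s - %(message)s"))

logger = logging.getLogger("rotation_naming_demo")  # hypothetical name
logger.setLevel(logging.INFO)
logger.addHandler(handler)
logger.propagate = False

logger.info("written before the rollover")
handler.doRollover()  # normally triggered by the first record after midnight
logger.info("written after the rollover")
handler.close()

files = sorted(os.listdir(log_dir))
print(files)  # e.g. ['my_log.log', 'my_log.log.<YYYY-MM-DD>']

# With when="midnight", rotated files get a %Y-%m-%d date suffix.
rotated = [f for f in files if re.fullmatch(r"my_log\.log\.\d{4}-\d{2}-\d{2}", f)]
print(rotated)
```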

Hourly Log Rotation

```python
from logging.handlers import TimedRotatingFileHandler

handler = TimedRotatingFileHandler(
    "/dbfs/tmp/mylogs/hourly.log",
    when="H",        # rotate every hour
    interval=1,
    backupCount=24,  # keep the last 24 hourly files
)
```

Size-Based Log Rotation

```python
from logging.handlers import RotatingFileHandler

handler = RotatingFileHandler(
    "/dbfs/tmp/mylogs/size.log",
    maxBytes=5 * 1024 * 1024,  # rotate when the file reaches 5 MB
    backupCount=5,             # keep 5 old files
)
```
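A quick way to see size-based rotation in action is to use a tiny `maxBytes` value. In this sketch (local temporary directory, hypothetical logger name, and a 200-byte limit chosen only for the demo), the handler keeps `size.log` plus at most two numbered backups and deletes anything older.

```python
import logging
import os
import tempfile
from logging.handlers import RotatingFileHandler

log_dir = tempfile.mkdtemp()
log_path = os.path.join(log_dir, "size.log")

# 200 bytes is unrealistically small; it just forces several rollovers quickly.
handler = RotatingFileHandler(log_path, maxBytes=200, backupCount=2)
handler.setFormatter(logging.Formatter("%(levelname)s - %(message)s"))

logger = logging.getLogger("size_rotation_demo")  # hypothetical name
logger.setLevel(logging.INFO)
logger.addHandler(handler)
logger.propagate = False

for i in range(50):
    logger.info("record number %03d", i)
handler.close()

files = sorted(os.listdir(log_dir))
print(files)  # ['size.log', 'size.log.1', 'size.log.2']
```

The oldest backup is overwritten on each rollover, so disk usage stays bounded at roughly `maxBytes * (backupCount + 1)`.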

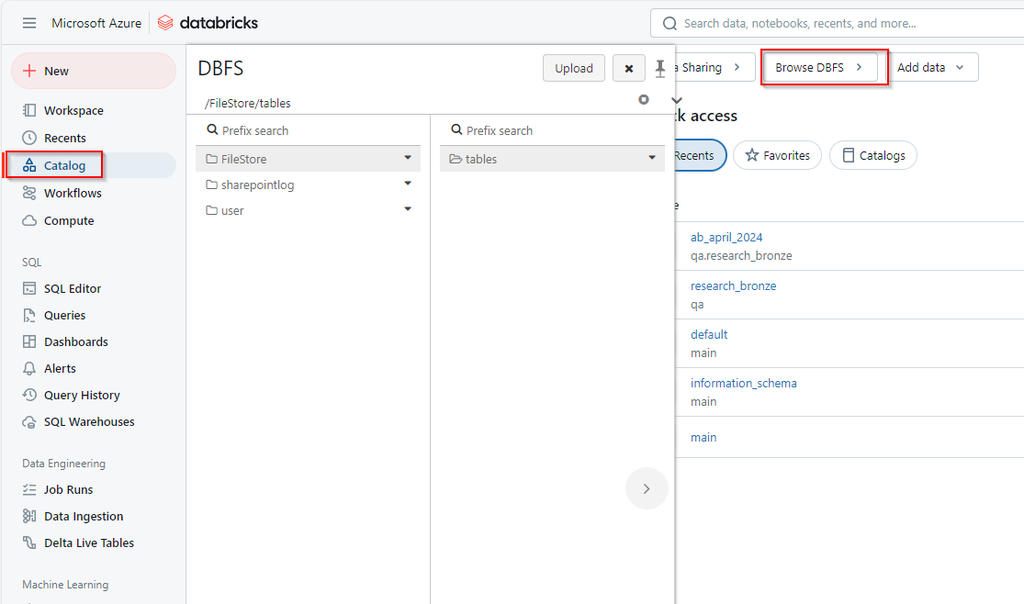

Logging to Unity Catalog Volume (BEST PRACTICE)

Create a volume first (once):

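```sql
CREATE VOLUME IF NOT EXISTS my_catalog.my_schema.logs;
```

Then use it in code:

```python
# logger_setup is a module you write yourself; this call assumes its
# get_logger() accepts app_name and log_path
from logger_setup import get_logger

logger = get_logger(
    app_name="customer_etl",
    log_path="/Volumes/my_catalog/my_schema/logs/customer_etl",
)

logger.info("Starting ETL pipeline")
```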
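`logger_setup` is not a Python or Databricks library module; it stands for a helper module you write yourself. A minimal sketch of what its `get_logger(app_name, log_path)` could look like, assuming you want one daily-rotated file per application inside `log_path`. The file layout (`<log_path>/<app_name>.log`) is an assumption for illustration.

```python
# logger_setup.py: a hypothetical helper module, not part of any library
import logging
import os
from logging.handlers import TimedRotatingFileHandler


def get_logger(app_name: str, log_path: str, level: int = logging.INFO) -> logging.Logger:
    """Return a logger that writes to <log_path>/<app_name>.log, rotated daily."""
    logger = logging.getLogger(app_name)
    if logger.handlers:  # already configured, e.g. by a re-run notebook cell
        return logger

    os.makedirs(log_path, exist_ok=True)
    handler = TimedRotatingFileHandler(
        os.path.join(log_path, f"{app_name}.log"),
        when="midnight",
        backupCount=30,
    )
    handler.setFormatter(
        logging.Formatter("%(asctime)s - %(levelname)s - %(name)s - %(message)s")
    )
    logger.setLevel(level)
    logger.addHandler(handler)
    return logger
```

Checking `logger.handlers` before creating a handler means a re-run cell gets the existing logger back instead of opening another file handle and writing every line twice.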

Databricks ETL Logging Template (Production-Ready)

Features

- Writes logs to file

- Uses daily rotation (keeps 30 days)

- Logs INFO, ERROR, stack traces

- Works in notebooks + Jobs

- Fully reusable

1. Create the logger (ready for copy & paste)

File version

```python
import logging
import os
from logging.handlers import TimedRotatingFileHandler


def get_logger(name="etl"):
    logger = logging.getLogger(name)

    # Prevent duplicate handlers in notebook re-runs: return the configured
    # logger before opening another file handle
    if logger.handlers:
        return logger

    log_path = "/dbfs/tmp/logs/pipeline.log"  # or a UC volume path
    os.makedirs(os.path.dirname(log_path), exist_ok=True)

    handler = TimedRotatingFileHandler(
        log_path,
        when="midnight",  # start a new file at midnight
        interval=1,
        backupCount=30,   # keep the last 30 days
    )
    formatter = logging.Formatter(
        "%(asctime)s - %(levelname)s - %(name)s - %(message)s"
    )
    handler.setFormatter(formatter)

    logger.setLevel(logging.INFO)
    logger.addHandler(handler)
    return logger


logger = get_logger("my_pipeline")
logger.info("Logger initialized")
```

Unity Catalog Volume version

```python
# Create the folder if it doesn't exist (the volume itself must already exist)
dbutils.fs.mkdirs("/Volumes/my_catalog/my_schema/logs")

# Create a logger pointing to the UC volume
import logging
from logging.handlers import TimedRotatingFileHandler


def get_logger(name="etl"):
    logger = logging.getLogger(name)

    # Prevent duplicate handlers when re-running notebook cells
    if logger.handlers:
        return logger

    log_path = "/Volumes/my_catalog/my_schema/logs/pipeline.log"

    handler = TimedRotatingFileHandler(
        filename=log_path,
        when="midnight",
        interval=1,
        backupCount=30,  # keep last 30 days
    )
    formatter = logging.Formatter(
        "%(asctime)s - %(levelname)s - %(name)s - %(message)s"
    )
    handler.setFormatter(formatter)

    logger.setLevel(logging.INFO)
    logger.addHandler(handler)
    return logger


logger = get_logger("my_pipeline")
logger.info("Logger initialized")
```
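Depending on your runtime and storage, appending to a file that lives directly in a volume may not work as expected: Databricks' file-system documentation lists limitations on direct appends and random writes for some locations, so check it for your setup. A common workaround, shown here as a sketch, is to log to the driver's local disk during the run and copy the finished file to the volume at the end. The helpers `start_local_log` and `publish_log` are hypothetical, and the example copies into a temporary directory so it runs outside Databricks.

```python
import logging
import os
import shutil
import tempfile


def start_local_log(name: str) -> tuple[logging.Logger, logging.Handler, str]:
    """Log to a local file; return the logger, its handler and the file path."""
    local_dir = tempfile.mkdtemp()  # on a Databricks driver this is typically local disk
    local_path = os.path.join(local_dir, f"{name}.log")
    handler = logging.FileHandler(local_path)
    handler.setFormatter(
        logging.Formatter("%(asctime)s - %(levelname)s - %(name)s - %(message)s")
    )
    logger = logging.getLogger(name)
    logger.setLevel(logging.INFO)
    logger.addHandler(handler)
    return logger, handler, local_path


def publish_log(logger: logging.Logger, handler: logging.Handler,
                local_path: str, target_dir: str) -> str:
    """Detach and close the handler, then copy the finished log into target_dir."""
    logger.removeHandler(handler)
    handler.close()  # flush and release the file before copying
    os.makedirs(target_dir, exist_ok=True)
    return shutil.copy(local_path, target_dir)


logger, handler, local_path = start_local_log("my_pipeline_run")
try:
    logger.info("=== ETL START ===")
    logger.info("=== ETL COMPLETED ===")
finally:
    # On Databricks, target_dir could be a volume folder such as
    # "/Volumes/my_catalog/my_schema/logs"; a temp dir stands in here.
    published = publish_log(logger, handler, local_path, tempfile.mkdtemp())

print(published)
```

Because the file is copied once at the end, this approach pairs naturally with one-file-per-run naming (like the `run_id` pattern earlier) rather than with a rotating handler.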

2. Use the logger inside your ETL

```python
logger.info("=== ETL START ===")

try:
    logger.info("Step 1: Read data")
    df = spark.read.csv("/mnt/raw/data.csv")

    logger.info("Step 2: Transform")
    df2 = df.filter("value > 0")

    logger.info("Step 3: Write output")
    df2.write.format("delta").mode("overwrite").save("/mnt/curated/data")

    logger.info("=== ETL COMPLETED ===")

except Exception as e:
    # exc_info=True appends the full stack trace to the log record
    logger.error(f"ETL FAILED: {e}", exc_info=True)
    raise
```

Resulting log file (example)

The output file looks like this:

```text
2025-11-21 15:12:01,233 - INFO - my_pipeline - === ETL START ===
2025-11-21 15:12:01,415 - INFO - my_pipeline - Step 1: Read data
2025-11-21 15:12:01,512 - INFO - my_pipeline - Step 2: Transform
2025-11-21 15:12:01,660 - INFO - my_pipeline - Step 3: Write output
2025-11-21 15:12:01,780 - INFO - my_pipeline - === ETL COMPLETED ===
```

If an error occurs:

```text
2025-11-21 15:15:44,812 - ERROR - my_pipeline - ETL FAILED: File not found
Traceback (most recent call last):
...
```

Best Practices for Logging in Python

Logs let developers monitor and debug applications and spot patterns that can inform product decisions. To get that value, make sure the logs you generate are informative, actionable, and scalable:

- Avoid the root logger: create a logger for each module or component in an application.

- Centralize your logging configuration: keep all the logging configuration code in one Python module.

- Use correct log levels.

- Write meaningful log messages.

- Choose deliberately between % formatting and f-strings in log calls: %-style arguments, as in logger.info("rows=%d", n), are only formatted if the record is actually emitted.

- Log using a structured format (JSON).

- Include timestamps and ensure consistent formatting.

- Keep sensitive information out of logs.

- Rotate your log files.

- Centralize your logs in one place.
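A minimal sketch of two of these practices together: a JSON formatter built on the standard library (a hand-rolled alternative to third-party JSON logging packages) and %-style arguments that the logger formats only when a record is actually emitted. The `JsonFormatter` class, its field names, the logger name, and the sample values are illustrative choices, not a standard schema.

```python
import io
import json
import logging


class JsonFormatter(logging.Formatter):
    """Render each record as one JSON object per line."""

    def format(self, record: logging.LogRecord) -> str:
        payload = {
            "timestamp": self.formatTime(record, "%Y-%m-%dT%H:%M:%S"),
            "level": record.levelname,
            "logger": record.name,
            "message": record.getMessage(),  # applies the %-style args here
        }
        if record.exc_info:
            payload["exception"] = self.formatException(record.exc_info)
        return json.dumps(payload)


buffer = io.StringIO()
handler = logging.StreamHandler(buffer)
handler.setFormatter(JsonFormatter())

logger = logging.getLogger("json_demo")  # hypothetical name
logger.setLevel(logging.INFO)
logger.addHandler(handler)
logger.propagate = False

# Pass values as arguments instead of an f-string: the message string is
# only built if the record passes the level check.
logger.info("Loaded %d rows from %s", 1200, "raw.sales")
logger.debug("Below the INFO threshold, so never formatted: %s", "expensive value")

print(buffer.getvalue(), end="")
```

One JSON object per line is easy to ship to a central log store and query by field later.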

Conclusion

To achieve the best logging practices, it is important to use appropriate log levels and message formats, and implement proper error handling and exception logging. Additionally, you should consider implementing log rotation and retention policies to ensure that your logs are properly managed and archived.